Impact Factor : 0.548

- NLM ID: 101723284

- OCoLC: 999826537

- LCCN: 2017202541

Walter P Vispoel*, Hyeryung Lee and Tingting Chen

Received: November 10, 2023; Published: November 16, 2023

*Corresponding author: Walter P Vispoel, Department of Psychological and Quantitative Foundations, University of Iowa, USA

DOI: 10.26717/BJSTR.2023.53.008457

In this brief article, we describe methods that can be effectively used to determine the value of reporting subscale scores in addition to composite scores from assessment measures, illustrate additional techniques to determine the number of items needed to support the viability of subscale scores, and direct readers to resources where relevant formulas and computer code are provided to implement these procedures.

Researchers and practitioners routinely use measures that produce scores at different levels of aggregation. Common examples of such measures include achievement batteries that produce total scores and nested sub-scores for separate subject matter areas (e.g., English, reading, math, science; [1]), ability inventories that produce both total scores and nested sub-scores for different areas of intellectual functioning (e.g., verbal, quantitative, non-verbal; [2]), personality questionnaires that include scores for both global domains (e.g., neuroticism) and more specific subdomain facets nested within each domain (e.g., anxiety, angry hostility, depression, self-consciousness, impulsiveness, vulnerability under neuroticism; [3]), and so forth. An important question often asked about subdomain or subscale scores from such measures is whether they provide useful information or added value beyond the total or composite scores reported for those instruments. To answer this question, measurement specialists have produced a variety of indices to quantify subscale viability. In this article, we illustrate one such procedure, first described by Haberman (2008 [4]; see also [5-7]), that can be applied within a wide variety of measurement paradigms, including classical test theory, generalizability theory, item response theory, and factor analytic techniques [8-12].

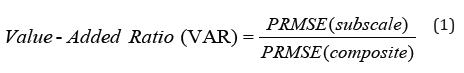

Haberman's (2008) method is based on computing indices for a subscale and its associated composite that reflect the reduction in measurement error when estimating the underlying construct(s) represented by the subscale's scores. Technically, these indices are proportional reductions in mean-squared error (PRMSEs; [4-12]) when true scores are estimated from observed scores. The two indices can then be combined into a value-added ratio (VAR; see [7]) by dividing the PRMSE for the subscale by the PRMSE for the composite, as shown in Equation (1). VARs greater than 1.00 support reporting subscale scores in addition to composite scores, and that support strengthens as VARs rise further above 1.00.
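Under classical test theory, one of the paradigms listed above, both PRMSEs have closed forms: the subscale PRMSE equals the subscale's reliability, and the composite PRMSE equals the squared correlation between the composite's observed score and the subscale's true score. The sketch below computes both from item-level data and forms the VAR of Equation (1). It assumes coefficient alpha as the reliability estimate and uncorrelated measurement errors across items; the function names are our own, and this is a simplified classical illustration, not the generalizability theory code provided by Vispoel and colleagues [20-23].

```python
from statistics import variance


def covariance(x, y):
    """Sample covariance with an n - 1 denominator, matching statistics.variance."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    return sum((a - mx) * (b - my) for a, b in zip(x, y)) / (n - 1)


def coefficient_alpha(items):
    """Cronbach's alpha; `items` is a list of item-score columns (one list per item)."""
    k = len(items)
    totals = [sum(row) for row in zip(*items)]
    item_var_sum = sum(variance(col) for col in items)
    return k / (k - 1) * (1 - item_var_sum / variance(totals))


def value_added_ratio(sub_items, rest_items):
    """VAR = PRMSE(subscale) / PRMSE(composite), both for predicting the subscale true score.

    sub_items:  item columns belonging to the subscale
    rest_items: the remaining item columns in the same composite
    Assumes errors are uncorrelated across items, so Cov(subscale true score, rest) = Cov(sub, rest).
    """
    sub = [sum(row) for row in zip(*sub_items)]
    rest = [sum(row) for row in zip(*rest_items)]
    composite = [s + r for s, r in zip(sub, rest)]

    rel_sub = coefficient_alpha(sub_items)
    true_var = rel_sub * variance(sub)                # Var(subscale true score)
    cov_true_comp = true_var + covariance(sub, rest)  # Cov(subscale true score, composite)

    prmse_sub = rel_sub
    prmse_comp = cov_true_comp ** 2 / (true_var * variance(composite))
    return prmse_sub / prmse_comp
```

A VAR above 1.00 from `value_added_ratio` means the subscale's own observed score predicts its true score better than the composite does. The formulas break down when alpha is zero or negative, so a real analysis would check reliability first.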

In the illustrations that follow, we use data from a study by Vispoel et al. [13] to apply and extend Haberman's method to the measurement of personality traits. The data consist of responses from 330 college students who completed the recently updated and extended 60-item form of the Big Five Inventory (BFI-2 [14]). The BFI-2 measures five superordinate personality traits (Agreeableness, Conscientiousness, Extraversion, Negative Emotionality, and Open-Mindedness), along with three nested subordinate constructs, or facets, for each superordinate trait (see Table 1 for the titles of all facet subscales). In the reported analyses, composites represent superordinate constructs and subscales represent subordinate constructs. Each composite scale has twelve items, with four items for each of its three nested subscales, and the items within each subscale are equally balanced between positive and negative phrasing. Items are answered using a 5-point Likert-style rating scale (1 = Disagree strongly, 2 = Disagree a little, 3 = Neutral, no opinion, 4 = Agree a little, and 5 = Agree strongly).
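Before any PRMSE can be computed, item responses must be aggregated into subscale and composite scores, with negatively phrased items reverse-keyed; on a 1-5 scale, reverse-keying maps a rating x to 6 - x. The minimal sketch below shows one way to do this. The facet-to-domain grouping follows the published BFI-2 structure, but the item key (which item ids belong to which facet and which are reverse-keyed) is hypothetical and would need to be replaced with the instrument's published scoring key. The function names are our own.

```python
# BFI-2 hierarchy: five domains (composites), each with three facets (subscales).
BFI2_FACETS = {
    "Agreeableness": ["Compassion", "Respectfulness", "Trust"],
    "Conscientiousness": ["Organization", "Productiveness", "Responsibility"],
    "Extraversion": ["Sociability", "Assertiveness", "Energy Level"],
    "Negative Emotionality": ["Anxiety", "Depression", "Emotional Volatility"],
    "Open-Mindedness": ["Intellectual Curiosity", "Aesthetic Sensitivity", "Creative Imagination"],
}

SCALE_MIN, SCALE_MAX = 1, 5  # 5-point Likert-style response scale


def item_score(raw, reverse_keyed):
    """Reverse-key negatively phrased items so that higher always means more of the trait."""
    return SCALE_MIN + SCALE_MAX - raw if reverse_keyed else raw


def score_person(responses, key):
    """Return facet (subscale) and domain (composite) sums for one respondent.

    responses: dict mapping item id -> raw rating (1-5)
    key:       dict mapping facet name -> list of (item_id, reverse_keyed) pairs
               (HYPOTHETICAL here; substitute the published BFI-2 scoring key)
    Domains are scored only when all three of their facets appear in `key`.
    """
    facet_scores = {
        facet: sum(item_score(responses[item_id], rev) for item_id, rev in items)
        for facet, items in key.items()
    }
    domain_scores = {
        domain: sum(facet_scores[f] for f in facets)
        for domain, facets in BFI2_FACETS.items()
        if all(f in facet_scores for f in facets)
    }
    return facet_scores, domain_scores
```

With a hypothetical key that alternates positively and negatively phrased items within each four-item facet, a respondent who answers 5 to every Agreeableness item gets 5 + 1 + 5 + 1 = 12 on each facet and 36 on the domain, which illustrates why reverse-keying matters for balanced scales.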

The second column in Table 1 shows VARs for all BFI-2 subscales in their original four-item form. Nine of the fifteen subscales (Organization, Assertiveness, Energy Level, Sociability, Depression, Emotional Volatility, Aesthetic Sensitivity, Creative Imagination, and Intellectual Curiosity) have VARs above 1.00 and thus provide evidence of added value beyond their associated composite scores. These results raise a logical follow-up question: how might the remaining six subscales be revised to reach the threshold for added value?

A useful way to address this question is to apply generalizability theory-based prophecy techniques [15-19] to determine the extent to which adding items might improve subscale added value (see [8-12] for further details). The remaining columns (3-6) in Table 1 give estimated VARs for each subscale as pairs of items are successively added, up to a maximum of 12 items per subscale. The results show that the Trust, Productiveness, and Anxiety subscales reach the threshold of a VAR above 1.00, and thus support subscale score viability, once four items are added. In contrast, the Compassion, Respectfulness, and Responsibility subscales do not reach this threshold even after eight items are added, highlighting the redundancy of those subscales with their domain composite scores. To reach the desired threshold for these subscales without adding an excessive number of items, the original items might be revised to overlap less with the other subscales in the same global personality domain.
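The logic of such a prophecy can be sketched under simplifying assumptions. In a single-facet persons × items design, lengthening a scale by a factor k multiplies true-score variance by k squared and error variance by k, which gives the Spearman-Brown projection of the subscale PRMSE. The sketch below extends this to the composite PRMSE from the classical formulas, assuming (our assumptions, not necessarily the design behind Table 1) that added items are parallel to the existing ones, that errors are uncorrelated, and that every subscale in the composite gains the same number of items so the composite stays balanced. The function name and its inputs are hypothetical.

```python
def projected_var(rel_sub, var_sub, rel_rest, var_rest, cov_sub_rest, k):
    """Project the VAR after lengthening every subscale in the composite by factor k.

    rel_sub, var_sub:   reliability and observed variance of the subscale sum
    rel_rest, var_rest: same quantities for the sum of the other subscales in the composite
    cov_sub_rest:       observed covariance between those two sums
    k:                  new length / current length (e.g. 6 / 4 when going from 4 to 6 items)
    Assumes parallel added items and uncorrelated errors.
    """
    t_sub, e_sub = rel_sub * var_sub, (1 - rel_sub) * var_sub       # true / error variance
    t_rest, e_rest = rel_rest * var_rest, (1 - rel_rest) * var_rest

    # Lengthening scales true variances and covariances by k**2 and error variances by k;
    # dividing numerator and denominator by k**2 gives the expressions below.
    prmse_sub = t_sub / (t_sub + e_sub / k)                          # Spearman-Brown projection
    true_comp_var = t_sub + t_rest + 2 * cov_sub_rest                # Var(composite true score)
    prmse_comp = (t_sub + cov_sub_rest) ** 2 / (
        t_sub * (true_comp_var + (e_sub + e_rest) / k)
    )
    return prmse_sub / prmse_comp
```

For a four-item subscale projected to 6, 8, 10, and 12 items, k takes the values 1.5, 2, 2.5, and 3. Under these assumptions, as k grows the subscale PRMSE approaches 1 and the VAR approaches the reciprocal of the squared correlation between the subscale and composite true scores. This is one way to see why highly redundant subscales, such as those whose true scores correlate very strongly with the composite, may stay near 1.00 no matter how many items are added.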

The examples just described illustrate the value of coupling Haberman's procedure with generalizability theory prophecy techniques to evaluate the benefits of reporting subscale scores in addition to composite scores from a popular measure widely used in psychological research. However, these techniques are applicable to any assessment domain and instrument for which both composite and subscale scores are reported. Additional information about Haberman's methods can be found in [4-12], and formulas and computer code for integrating them with generalizability theory techniques are provided by Vispoel and colleagues [20-23]. We hope that readers will familiarize themselves with these procedures and use them to improve the quality and efficiency of measurement in their own areas of interest.