Jessica J Messersmith*1, Lindsey Jorgensen2, Teresa Wierzba-Bobrowicz2, David Alexander3

Received: June 21, 2018; Published: July 05, 2018

*Corresponding author: Jessica J Messersmith, Department of Communication Sciences and Disorders, University of South Dakota, Vermillion

DOI: 10.26717/BJSTR.2018.06.001345

Purpose: Verification measures of many hearing aids and cochlear implants are commonly conducted at 0-degrees azimuth. The purpose of this study was to determine the effect of presentation azimuth on audiometric thresholds and verification of hearing aids (HA) and cochlear implants (CI).

Method: Group 1 was comprised of 20 adult listeners using a HA and CI. Group 2 was comprised of 5 adult listeners using their personal CI and HA. Verification measures were conducted at 0-, 45-, and 90-degree presentation azimuths for each device. For all participants, Real Ear Unaided Response (REUR), Real Ear Occluded Response (REOR) and Real Ear to Coupler Difference (RECD) values were obtained. For hearing aids, Real Ear Aided Response (REAR) frequency measures of the ISTS in a sound field environment were obtained at the different presentation azimuths. For CI measures, the same measures were made; additionally, aided behavioral threshold measures were obtained at the three azimuths.

Results: Results from the current study indicated that the 45- and 90-degree azimuths have a higher dB SPL frequency response than those measured at 0-degrees. Aided behavioral thresholds were better at 45- and 90- than at 0-degrees.

Conclusion: The findings of this study indicate that head position or movement during verification measures may affect measured performance with hearing aids and cochlear implants. There may be a greater effect on performance during speech measurements.

Keywords: Sound Location; Verification Measures; Hearing Aids; Cochlear Implants; Sound Source Location

It is estimated that in the United States, approximately six million individuals use hearing aids (HA) [1] and approximately 70,000 individuals have received cochlear implants (CI) (National Institute on Deafness and Other Communication Disorders, 2010). During the fitting of the device, HAs and CIs should be verified to ensure they are programmed appropriately for the individual [2]. These verification measures are commonly performed using sound field presentation of standardized stimuli. Although there are no universal standards for performing these sound field measures, there are recommendations from professional groups and verification system manufacturers on procedures for performing these measures. One concern when performing these measures is that outcome measures utilizing sound field testing could be influenced by environmental factors, particularly the presentation azimuth of the stimuli [3]. Presentation azimuth is one factor that may vary across verification procedures due to patient head movements and/or differences in speaker placement by the audiologist. While previous literature may suggest a specific placement of the loudspeaker, these protocols may not be strictly followed clinically. Further, most audiologists believe that the equalization tone presented prior to measurements will account for variability in the speaker location. In order to understand the impact of presentation azimuth on verification measures, it is important to understand the impact of changing the position of the speaker on the measurements of audibility and confirmation of dynamic range. The purpose of this study is to evaluate the impact of presentation azimuth on verification measures for HAs and CIs in the clinical setting.

Verification is an essential component of both HA and CI programming protocols. Verification allows the audiologist to ensure that the programming of a hearing device is set appropriately so that specified targets are obtained based on one’s specific hearing loss [4] and ensures that there is appropriate gain and output of the hearing device. Although HA and CI differ in mechanics and verification protocols, they are the same in that stimuli are presented in the sound field during verification (although, the sound field measures performed with a CI could arguably be called validation measures). While it is common to complete these measurements at the ear-level of the patient, some of these measurements may also be completed in the test box. Simulated real ear measurements as well as feature verification of the devices can be tested using the available test box measurements. Speech perception testing conducted to evaluate performance with a CI reflects validation of the user’s performance with the device. These validation speech measurements may also be completed with individuals who only utilize a HA.

Verification of HA fittings is one of the essential stages of the HA fitting process [5]. The focus throughout each of the stages is to ensure that sounds are audible, yet not uncomfortably loud, to individuals with HAs, which is why it is important to ensure that the devices are fit and verified appropriately. Currently, the only method available to accurately record and measure a signal is with a real-ear verification system. This equipment conducts measurements at the ear level (or within the ear canal), referred to as “on-ear measurements”, or conducts the measurements within the test box using a person’s ear acoustics as a reference. On-ear measures utilizing probe microphone technology are an approach to ensure accurate measurements of an acoustic signal within an individual’s unique ear with the use of specialized signals and equipment [5]. This safeguards that the HA settings are suitable for the individual and verifies audibility while ensuring that uncomfortable loudness levels are not an issue for the HA user. Although there are many different real-ear verification systems, all systems utilize the same general principles and procedures. When conducting on-ear measurements, manufacturers of real-ear verification equipment recommend that the individual be positioned at least 18-36 inches from the loudspeaker [5-8]. The manuals for the verification equipment are void of specifics regarding patient location or generally state that the patient should be at 0-degrees azimuth from the speaker. Anecdotally, it is rare that the patient maintains the exact position throughout the entire verification session. The most commonly used fitting formulae (DSL and NAL) were developed using very specific protocols for verification; with respect to the current topic, these fitting formulae were created assuming a speaker at 0-degrees azimuth.
It should be noted that these recommendations are for typical bilateral HA fittings and do not apply to fitting of remote devices such as CROS/BiCROS devices. While 0-degrees azimuth is the recommended speaker orientation for verification procedures in these situations, these protocols are not always followed in typical clinical practice; the purpose of this study was to determine if deviations from these strict protocols would change the outcomes of the on-ear measurements. [9] suggested that while the 45-degree azimuth was the most reliable, maintaining this position is difficult as the speaker would need to be moved for bilateral measurements and patients often move to look at the computer output. Therefore, 0-degrees azimuth was the preferred placement. Clinically, however, these recommendations are not always followed.

When measuring audibility and dynamic range, guidelines are available from professional organizations [5,11] as well as the manufacturers of verification systems [6-9]. These guidelines suggest the measurement of real-ear-to-coupler-difference (RECD) for the accurate conversion of the measured dB HL thresholds to the necessary dB SPL thresholds. Once this is completed, the test signal is played for measurements. The real-ear-unaided-response (REUR) can be conducted with only the probe-tube in the ear. This is to capture the patient’s natural ear canal resonance. This measurement is to be compared to the real-ear-occluded-response (REOR), which is measured with the probe-tube and the HA in the ear with the HA muted. For those HAs with a lot of venting, this response may be compared to determine what frequencies of the person’s natural resonance are impacted by the insertion of the HA. This is particularly important for BTE HAs coupled to a thin tube with an open dome fit on a person with normal to near-normal low frequency thresholds. This person may realize a decrease in hearing ability if the HA is occluding the ear canal. Finally, the real-ear-aided-response (REAR) is measured, which is the probe-tube and HA in the ear with the HA active. This is the measurement used to determine audibility and dynamic range. These four measurements make up a typical quality HA verification protocol.
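The comparison between REUR and REOR described above amounts to a per-frequency subtraction. The following is a minimal sketch in Python, assuming both responses are available as dB SPL values at the same frequencies; the function name and all numeric values are hypothetical and are not data from this study.

```python
def occlusion_difference(reur_db, reor_db):
    """Return REOR minus REUR (dB) at each frequency present in both responses.

    Negative values mark frequencies where inserting the muted HA
    reduced the sound level measured in the ear canal.
    """
    shared = sorted(set(reur_db) & set(reor_db))
    return {freq: round(reor_db[freq] - reur_db[freq], 1) for freq in shared}


# Hypothetical illustration values in dB SPL, keyed by frequency in Hz.
reur = {250: 60.0, 1000: 62.0, 2700: 75.0, 4000: 70.0}
reor = {250: 60.0, 1000: 61.0, 2700: 68.0, 4000: 66.0}

difference = occlusion_difference(reur, reor)
for freq, delta in difference.items():
    print(f"{freq} Hz: {delta:+.1f} dB")
```

With an open dome, differences near zero in the low frequencies would be expected; larger negative values would suggest that the coupling is altering the natural ear canal resonance.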

There are many signals that can be used for verification, including speech, speech-like signals, pure tones, and different noise composites. Each of these signals has a specific role in verification, however, for verifying audibility and dynamic range of speech through a HA, a speech-based signal, such as the ISTS, is typically preferred clinically [12]. These signals are played out of a speaker at a specified level and are measured within the ear as REUR, REOR and REAR. When conducting probe microphone measurements clinically, an equalization tone, which is a pink noise, is played prior to each speech passage for soft, moderate and loud speech and MPO swept pre-tone measurement. The equalization tone is played to ensure that any changes found within the measurements are due to performance of the HA and not due to changes with the input level or the environment. Across the most commonly used verification manufacturer devices, a digital filter is then applied at the reference microphone to correct for changes in the acoustic signal caused by ambient noise in the room and other acoustic considerations to provide a flat frequency response at the reference microphone [7-9,12]. This signal is necessary to ensure that the output of the speaker is a flat response which ensures an accurate measurement of the HA function. Clinically, when conducting these measurements on a device with very little venting, the equalization tone is played prior to each presentation. The purpose of the equalization tone would be to eliminate any variability created by movements or other sounds within the environment from interfering with the tests of audibility. Additionally, it would calibrate the system from the location at which the speaker stands. While this is the general principle, several problems exist. 
This equalization tone is to be run prior to each measurement of audibility and loudness, with one large exception: for those HAs with a lot of venting, the equalization tone is to be played with the HA on the ear but muted (this configuration is also known as the real-ear-occluded-response [REOR]). This modified equalization method is employed because the equalization tone would otherwise be picked up by the HA and amplified.

The amplification of the equalization tone provided by the HA could leak out to the reference microphone, where the system would incorrectly register that the speaker is producing a signal that is too loud and would therefore decrease the output level. This decrease in output level would cause an inaccurate measurement of audibility. To overcome this, the equalization pink noise is played with the device on the ear but muted. After this measurement is taken, the HA is turned on, and the patient is instructed not to move, as the system will use only the single calibration tone for the minimum of three audibility measurements (soft, moderate and loud) and the MPO measurement. Results from [13] suggest this modified equalization method may not change the response of the HA across presentation azimuth. Specifically, they reported that if the person stays stationary, the equalization does not need to be run prior to each of the four measurements (soft, moderate, loud and MPO). One inherent problem of only running the calibration tone once is that the patient may move. This has been known to happen in the clinic. Manufacturers of real-ear systems have long documented the importance of conducting measurements repeatedly in the same position. The purpose of the equalization tone is to account for the speaker location and other external acoustic noises. However, there is little documentation as to whether the actual position of the speaker would impact the measurement clinically if the equalization tone was run appropriately. Given that the equalization tone should be run prior to the measurements of audibility and dynamic range, differences between speaker locations should be accounted for within the software. The purpose of this study was to determine if changing the position would change the response that would be used to determine audibility of the device.
When utilizing a speech signal, a pre-recorded processed speech stimulus, such as the commonly used carrot passage [14], can be used to provide a consistent sample to conduct evaluations. One way to characterize such passages is using the long-term average speech spectrum (LTASS). The LTASS is calculated by averaging the 1/3-octave band levels of a portion of the speech sample over a period of time (generally, approximately 10 seconds) [12]. On a real-ear verification system, a LTASS passage is available at various intensities [12] and is used in prescription and evaluation of HA fittings [13].
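As a rough illustration of the averaging step, the sketch below computes a long-term average from short-term 1/3-octave band levels. It assumes the band levels have already been measured frame by frame in dB SPL, and that averaging is done on a power basis (in the linear domain, then converted back to dB). The function name and example values are hypothetical, and verification systems may implement this step differently.

```python
import math


def long_term_average_db(frames_db):
    """Power-average short-term band levels (dB) across frames.

    frames_db: list of frames, each a list of band levels in dB,
    one value per 1/3-octave band; all frames have the same length.
    """
    if not frames_db:
        raise ValueError("at least one frame is required")
    n_bands = len(frames_db[0])
    averages = []
    for band in range(n_bands):
        # Convert dB to relative power, average, then convert back to dB.
        mean_power = sum(10 ** (frame[band] / 10) for frame in frames_db) / len(frames_db)
        averages.append(10 * math.log10(mean_power))
    return averages


# Two hypothetical frames with three bands each (dB SPL).
frames = [
    [60.0, 55.0, 50.0],
    [60.0, 45.0, 50.0],
]
ltass = long_term_average_db(frames)
```

Averaging in the power domain weights louder frames more heavily than a simple mean of the dB values would, so the second band here averages to a little over 52 dB rather than 50 dB.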

As previously stated, there are various speech passages available for use during verification measures. One such passage is the International Speech Test Signal (ISTS). The ISTS has been used to analyze the processing of speech by HAs because the signal contains the most relevant properties of natural speech, such as the average speech spectrum, modulation spectrum, the variation of the fundamental frequency with the appropriate harmonics, and the co-modulation of different frequency bands [11]. The ISTS consists of 500 ms segments of recordings of six female talkers reading the same passage in six languages, spliced together to match the average female spectrum [15]. Because the ISTS is an amalgamation of multiple languages and speakers, it contains many characteristics of natural speech in real life conditions in a short passage [11], which is an important consideration in clinical audiology.

The goal of HAs is to increase the audibility of speech and other environmental sounds. While this is also a goal for CI programming, an additional goal is to improve discrimination or understanding of these sounds. In the clinical environment, on-ear measurements are conducted on individuals with CIs to evaluate speech understanding, as opposed to the on-ear measurements designed to evaluate audibility that are done for HAs. At the current time, the only available means for evaluating audibility through a CI is sound field threshold testing. There is not a means to evaluate the physical output from the CI speech processor as is possible through a real-ear verification system used with HAs. The Minimum Speech Test Battery (MSTB) [16] is the guideline used for CI performance assessment, developed through a collaborative effort by the American Academy of Otolaryngology-Head and Neck Surgery and the CI manufacturers [16]. The MSTB outlines the recommended testing procedures for word and sentence recognition testing. The word recognition assessment is the pre-recorded Consonant-Nucleus-Consonant (CNC) word test [17]. The sentence recognition assessments include the Bamford-Kowal-Bench Speech-in-Noise (BKB-SIN) (Etymotic Research, 2005) and AzBio Sentence [18] tests. These assessments are recommended for both preoperative and postoperative evaluations [16]. The CNC word test is administered in quiet. The BKB-SIN is administered with the signal-to-noise ratio (SNR) decreasing at each subsequent sentence. The recommended testing procedure for the AzBio test is for it to be administered both in quiet and with a fixed SNR, where the SNR is determined by the speech understanding abilities of the listener [16].

These measures of CI performance occur in a sound field environment, specifically in an audiologic test booth. When the individual with a CI is tested, they are assessed with their CI device turned on sitting at a distance of one meter (as measured from the center of the person’s head to the loudspeaker) and oriented at a 0-degree azimuth in relation to the speaker [16]. This is the same orientation described in the HA verification section. Although pure-tone threshold testing procedures are not discussed in the MSTB, pure-tone threshold testing could be conducted as another measure of detectability of soft frequency-specific stimuli.

Given that the measures discussed for both HAs and CIs are completed in a sound field environment, factors related to sound transmission through a sound field are of interest. For example, an individual’s orientation in relation to the loud speaker during sound field testing impacts the path a stimulus has to take before it reaches an individual’s ears or microphones of his or her hearing devices. An individual’s head, its orientation to the sound source, and the frequency of the signal can affect how the person perceives the sound. The head-related transfer function (HRTF) is the result of the filtering effects of the head, ears, and body on the signal. The filtering properties of the HRTF provide for the perception of sound in a three-dimensional space and are used in localization. These cues are based on the acoustic interaction with the head and ears [19]. Due to the HRTF and other factors related to sound field presentation, the presentation azimuth is of concern when performing on-ear measurement, whether these be pure-tone threshold testing, on-ear HA verification measures, or speech perception testing for users of CIs. This concern, more specifically the potential impact of head movements on HA verification measures, has been evaluated in previous publications [3,20]. For example, [20] evaluated thresholds for pure-tone stimuli at various azimuths in young, normal hearing adults in a free-field environment with an occluded ear. Thresholds were obtained at 0-degrees azimuth and at azimuth intervals of 30-degrees where the occluded ear was between 270- and 0-degrees and the unoccluded ear was between 0- and 90-degrees. Results revealed that thresholds improved as the presentation azimuth moved toward 90-degrees, compared to thresholds obtained when the stimuli were presented at 0-degrees azimuth. The improvement in threshold was greatest at 30-degrees and 60-degrees azimuth of the unoccluded ear. 
Similar results were found for speech recognition testing (SRT). Although the participants were not using hearing devices, these results indicate that the impact of changing presentation azimuth may be clinically meaningful.

A similar improvement in threshold has been noted for children and adults who use CIs. [21] evaluated sentence recognition ability in the presence of background noise in 19 adult and pediatric CI recipients. Results revealed that speech recognition thresholds improved by up to 15 dB as the presentation azimuth moved toward the CI speech processor. Other investigators have evaluated the impact of presentation azimuth on verification measures in users of HAs. While results from these studies consistently demonstrate a change to the output of the verification measure as a function of presentation azimuth, these studies vary in the azimuths and verification tools (e.g. REUR vs insertion gain) utilized, and in the specific question related to presentation azimuth being asked. For example, [20] found that small variations in the presentation azimuth centered on 0 degrees led to small changes in insertion gain. Ringdahl & Leijon utilized these small variations in presentation azimuth to represent small variations in speaker placement that may result from head movement or slight inconsistencies in the speaker location.

Speaker distance has also been shown to impact on-ear measures. Stone and Moore (2004) evaluated the impact of speaker distance on REUR, which was measured without a hearing device in place, at two presentation azimuths (0 and 45 degrees). Results revealed less variability in REUR with changing distance at 0 degrees than at 45 degrees, and greater variability when speaker location changed in the vertical plane than in the horizontal plane. One potential limitation of the clinical application of Stone and Moore (2004) is the use of REUR. REUR is a measure that is performed without the hearing instrument in place [22]. As such, the impact of the hearing instrument itself on presentation azimuth cannot be stated. [3] performed on-ear verification measures (REUR, REAR, REIR) for conditions with a behind-the-ear (BTE) and in-the-ear (ITE) hearing aid in place on a KEMAR. A primary question of [3] was the role of reference microphone location on these on-ear measures. Results from [3] demonstrated that a 90-degree azimuth location was most susceptible to changes in reference microphone location. The impact of presentation azimuth is also of concern with CI speech processors due to the multiple potential microphone locations available. For example, CI speech processors that are worn on the ear may have a microphone location at the top of the speech processor casing, a microphone location slightly to the back of the top of the speech processor casing, or a microphone at the entrance of the pinna. Conversely, CI speech processors that are worn on the head or the body would have a microphone location on the head. These various microphone locations may potentially result in different input signals to the speech processor signal processing, thus impacting the ultimate signal the user receives.
Each of these works has furthered the knowledge of the role speaker azimuth plays in on-ear verification; however, a replication with procedures directly mimicking the clinical environment and including CI speech processors would provide additional information regarding clinical practice. More specifically, although there are recommendations for speaker placement during on-ear verification, observation of behaviors of audiologists in the clinical setting suggests variability remains in the actual speaker placement during on-ear verification. Some audiologists have been observed placing the speaker at 0-degrees while others have been observed placing the speaker at 45- or 90-degrees azimuth. This raises questions for the interpretation of on-ear verification of audibility and dynamic range across potentially different speaker locations.

The purpose of the current study was to evaluate the impact of different presentation azimuths on Real Ear Aided Response (REAR) for individuals utilizing an open-fit BTE HA and individuals utilizing a CI speech processor. More specifically, the study asked whether the impact of presentation azimuth differs based upon microphone location and physical structure of HA and CI speech processors. REAR was selected for use in this study because, although insertion gain and REAR are similar measures, REAR is more closely related to audibility, which is the basis of currently used verification techniques for HAs. Further, the presence of the physical hearing aid and cochlear implant device during the on-ear measures extends the clinical application of previous studies evaluating presentation azimuth and is necessary for guiding clinical practice patterns.

Data collection occurred at the University of South Dakota Scottish Rite Speech and Hearing Clinic in Vermillion, South Dakota. Informed consent was obtained from each participant prior to participation in the study. Ethical approval for this study was obtained through the Institutional Review Board of the University of South Dakota. An a-priori power analysis was conducted using a moderate effect size rather than an effect size based upon previous publications due to the utilization of simulated persons in the most closely related previous work [3]. This power analysis indicated that 19 participants were needed for a within-person comparison study. Twenty (n = 20) adult participants were included in group 1. Five (n = 5) bimodal listeners were enrolled in group 2, totaling 25 (n=25) participants for the study. Participants ranged in age from 23 to 83 years of age and used oral language (English) as their primary means of communication. Participants were recruited via flyers posted on the USD campus and through word of mouth. All participants completed the study in its entirety. Listeners in group 1 ranged in age from 23 years to 47 years old (average 28.4 years). There were 15 females and five males within group 1. Two participants in group 1 reported binaural hearing loss and were previous HA users, but were not actively wearing HAs at the time of study completion. All other participants in group 1 reported hearing within normal limits and did not use a HA or a CI outside of the testing session, previously or at the time of participation. Listeners in group 2 were bimodal users and ranged in age from 70 years to 83 years old (average 76 years). Bimodal users were included in group 2 so that both HA and CI devices were available for on-ear measures within an individual. All bimodal listeners presented with a severe or greater sensorineural hearing loss above 1000 Hz in the HA ear. Thresholds at 250 and 500 Hz ranged from 50 dB HL to 75 dB HL. 
All bimodal users used their personal hearing devices (CI and HA) for the study. There was one female and four males within group 2. The average length of HA use in group 2 was 24.6 years. The average length of cochlear implant use was approximately 2.5 years. All of the participants reported that they used oral language (English) as their primary means of communication. Listeners in group 2 reported that they wore their personal HA and CI during all waking hours.

All participants had real-ear unaided response (REUR), real-ear occluded response (REOR) and real-ear to coupler difference (RECD) measured to compare participants’ ear canal acoustics to preexisting data. The comparison between REUR (only the probe in the ear) and REOR (probe in ear with hearing aid on but muted) is necessary to measure the acoustic changes to the ear canal caused by the hearing aid [22].

Group 1 participants were tested using one of two behind-the-ear hearing aids (HA) from two manufacturers, described as hearing aid one (HA 1) and hearing aid two (HA 2). The demonstration HAs were “premium” level BTE HAs, chosen to provide amplification through as many speech frequencies as possible; devices were coupled to a thin tube of appropriate length with a medium open dome. All automatic features were disabled, and the HA was programmed in a fixed omnidirectional setting. This was to ensure that any differences in the measurements taken at the various azimuths could be attributed to the azimuth changes as opposed to the settings of the HA. Hearing aids were pre-programmed for a mild to moderate hearing loss using the manufacturer’s first-fit program to ensure that the aid was providing measurable gain, which could be compared for the purposes of the study. In addition to HA devices, measurements were obtained using three CI models. Participants in group 1 were limited to the use of two models of on-ear CI speech processors (on-ear CI 1 and on-ear CI 2) and one speech processor worn elsewhere on the body with the microphone located on the headpiece (head CI). Testing was conducted using on-ear CI 1, on-ear CI 2, and the head CI with typical placement of the speech processor (e.g. on the ear for on-ear CI 1 and on-ear CI 2) and headpiece, where the headpiece was held in place with double-sided toupee tape. The speech processors were not powered on during testing because they served only to represent the mass and dimensions of the speech processor during measurements. Photographic representation of the setup for each CI model is provided in Figure 1. Given the set-up for the HA and CI conditions, the on-ear measures conducted for the HA and CI conditions differed in the stage of signal processing at which measurements were obtained. Specifically, HA conditions were measured at the output of the HA processing (output end of tubing), whereas CI conditions were measured at the input to the speech processor signal processing (microphone location).

Figure 1: (a) The probe microphone is placed next to the processor microphone on the on-ear CI 1.

(b) The probe microphone is placed next to the t-microphone on the on-ear CI 2

(c) The probe microphone is placed immediately below the microphone port on the headpiece of the head CI. The CI speech processor is not shown in the picture; however, it is clipped to the shoulder.

These measurement locations were selected based upon what is clinically available at the current time. Therefore, the measurement location for HA conditions was consistent with typical set-up used during clinical verification measures. The preprocessing measurement location utilized for the CI conditions was utilized because it is not possible to conduct these measures at the post-processing stage, clinically. Further, the measurement location for the HA and CI conditions were selected to ensure that the role of the head, pinna, and ear canal would be represented in their respective role in the passage of the signal. For HA conditions, the head, pinna, and ear canal all play a role in the signal eventually reaching the user, whereas, for CI conditions, the head and pinna play a role in the signal eventually reaching the user. Given that the purpose of the study was to evaluate differences across microphone location and physical structure of HA and CI speech processors, these measurement locations, although different in the stage of processing within the device, were representative of the obstacles the signal would encounter when traveling through the sound field environment. Participants were tested individually within a sound treated room. Noise levels in the sound treated room were in accordance with American National Standards Institute Methods of Measurement of Real-Ear Performance Characteristics of Hearing Aids (1997). For the purposes of this study, the equalization tone was played with the device in the participant’s ear with the aid muted. The aid was then turned on and study measurements were conducted.

Figure 2: Schematic representation of equipment and booth setup for testing. The three shaded bars represent the orientation of the listener’s head relative to the speaker for the 3 test azimuths.

The participants were seated at the respective presentation azimuth during testing and were instructed to remain looking forward while maintaining an upright seated position. Measurements were made at three presentation azimuths: 0-degrees, 45-degrees, and 90-degrees. The presentation azimuths were established using protractors and laser-levels to ensure the proper azimuth. Each azimuth was marked with four pieces of tape of the same color on the floor that aligned with a four-legged chair, which was the chair the participants used during testing. Each azimuth had its own tape color. In sum, there were five different colors of tape, which designated all testing azimuths to account for measurements of the left and right ear devices for participants in groups 1 and 2 (0-degrees shared the same color for both groups). The four-legged chair was placed at the first testing azimuth and, after a test condition was concluded, the chair was rotated to the next azimuth position so that each leg of the chair was on the proper tape color. This was to ensure proper and consistent azimuth placement. Figure 2 demonstrates the layout of the sound booth and location of equipment during testing.

Real-ear to coupler differences were collected using the Audioscan Verifit Version 1 system with software version 3.12. The Verifit test box reference microphone was calibrated prior to testing. For RECD measurements, the RECD transducer was connected to the 2cc coupler and the coupler was measured. After the 2cc coupler measurement, the Verifit was set to conduct on-ear RECD measures on the respective ear using a foam insert. The probe microphone placement was re-verified via otoscopic examination. Additionally, for groups 1 and 2, REORs and REURs were recorded in the ear with the HA. The signal was played into the open ear to record the REUR and again while the participant was wearing the muted hearing aid to record the REOR. The purpose of these measurements was to determine the loss of sound due to the partial occlusion of the ear caused by placement of the HA in the ear. The International Speech Test Signal (ISTS) stimulus was presented through the sound field via a speaker connected to an Interacoustics AC-40 audiometer. The ISTS and corresponding calibration tone were downloaded 16-bit Waveform Audio File Format files. The files were presented via an iPod Touch® (4th generation), which was connected to the Interacoustics AC-40 audiometer via a standard stereo audio cable and cable extension. The iPod was controlled by the researcher, who was seated in the sound-treated booth behind the participant. The ISTS moderate intensity spectrum sentence and calibration tone were presented through the right-facing loudspeaker in the test room. Prior to testing each day, the calibration tone was played through the set-up just described and the intensity was measured using a Quest Model 1900 sound level meter (SLM) with an A-weighted scale, with the SLM electret microphone located at a position that corresponded with the center of the listener’s head in each condition. The Interacoustics AC-40 audiometer/iPod was then adjusted so that the SLM reading indicated 60 dB SPL.

While the ISTS stimulus was being presented, the stimulus level was measured in the participant’s ear. This measure was obtained for each presentation azimuth and each device condition. The probe microphone was placed into the participant’s left ear. Previous research suggests that for adults there is no significant difference in RECD measurements between the right and the left ear (Munro & Howlin, 2010). The left BTE HA, programmed as described above, was placed into the participant’s ear. The ISTS signal was played through the speaker and the sound pressure level was recorded in the participant’s ear using the “speech live” setting on the Audioscan Verifit. In this setting, the Verifit 1 records the response to an external source of input. In this case, the system was recording the ISTS signal output from the speaker as described above. Once all three azimuth conditions were completed using one of the two models of HAs, the same testing was completed using the three CIs. For this testing, the probe microphone was affixed to the CI near the active microphone on the speech processor for the on-ear CIs (on-ear CI 1 and on-ear CI 2) and on the microphone located on the headpiece that was placed on the head (head CI).

All odd-numbered participants utilized HA 1 and all even-numbered participants utilized HA 2. Each participant used three demonstration CI speech processors: two on-ear processors (on-ear CI 1 and on-ear CI 2) and one speech processor worn at the level of the head (head CI). The order of device for each series of testing was randomized. To account for order effects, participant one began testing at 0-degrees and subsequently tested at 45- and 90-degrees. Participant two began testing at 45-degrees and subsequently tested at 90- and 0-degrees. Participant three began testing at 90-degrees, progressed to 0-degrees, and finally 45-degrees. This pattern continued through the remainder of the participants.
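
The device assignment and rotating azimuth order described above can be sketched in a few lines of Python. This is only an illustration, not the authors' procedure: the function names are hypothetical, and it assumes that "this pattern continued" means the three starting azimuths repeat in a cycle, so that participant four starts again at 0-degrees.

```python
AZIMUTHS = [0, 45, 90]  # presentation azimuths in degrees


def azimuth_order(participant_number: int) -> list[int]:
    """Return the azimuth test order for a 1-indexed participant number.

    Assumption: the starting azimuth cycles 0 -> 45 -> 90 -> 0 ... across
    consecutive participants, as implied by the first three participants.
    """
    start = (participant_number - 1) % len(AZIMUTHS)
    return AZIMUTHS[start:] + AZIMUTHS[:start]


def hearing_aid_model(participant_number: int) -> str:
    """Odd-numbered participants use HA 1, even-numbered participants use HA 2."""
    return "HA 1" if participant_number % 2 == 1 else "HA 2"


for number in range(1, 5):
    print(number, hearing_aid_model(number), azimuth_order(number))
```

Rotating the starting azimuth in this way means each azimuth appears in each serial position equally often once the number of participants is a multiple of three.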

In addition to the on-ear measures described, aided sound field behavioral threshold testing was completed for individuals in Group 2 at each of the three azimuths with the CI only. To limit response from the contralateral ear, a masking noise of 60 dB HL was presented via an ER3A insert earphone to the contralateral ear. Behavioral pure-tone thresholds were measured in the sound field at 500, 1000, 2000, 3000, 4000, and 6000 Hz utilizing frequency-modulated tones. Threshold was defined according to ASHA audiometric testing guidelines [23] utilizing a modified Hughson-Westlake procedure [24]. In accordance with the Minimum Speech Test Battery (2011), assessments within the sound field environment were conducted at a distance of one meter as measured from the center of the participant’s head. The testing order for participants with bimodal devices was similar to Group 1. The device (HA or CI) used to start the testing was chosen in a random order. As such, the order of the on-ear measures and pure-tone aided threshold testing was randomized. The order of azimuthal testing occurred as described for group 1. Throughout the study, each participant in group 1 completed three iterations of the ISTS sample (one for each azimuth tested) with four different hearing devices (one HA and three different CI processors) for a total of twelve iterations. Participation in group 1 required approximately 30 minutes. Group 2 bimodal users completed three iterations of the ISTS sample with their CI and three iterations of the ISTS sample with their HA (total of six iterations). Bimodal users also completed sound field behavioral testing at three azimuths. Participation in group 2 required approximately 45 minutes.
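
As a quick check on the iteration counts, the test conditions can be enumerated as every device paired with every azimuth. The following is a minimal sketch with hypothetical variable names; the device labels follow the text (one HA plus three CI processors per group 1 participant, one personal CI and one personal HA per group 2 participant).

```python
from itertools import product

AZIMUTHS = (0, 45, 90)  # degrees

# Devices worn by a single participant in each group, as described in the text.
GROUP1_DEVICES = ("HA", "on-ear CI 1", "on-ear CI 2", "head CI")
GROUP2_DEVICES = ("personal CI", "personal HA")


def ists_conditions(devices, azimuths=AZIMUTHS):
    """List every (device, azimuth) pair measured with the ISTS sample."""
    return list(product(devices, azimuths))


group1_conditions = ists_conditions(GROUP1_DEVICES)
group2_conditions = ists_conditions(GROUP2_DEVICES)

print(len(group1_conditions))  # 12 ISTS iterations per group 1 participant
print(len(group2_conditions))  # 6 ISTS iterations per group 2 participant
```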

When comparing the mean REURs to REORs, results indicated that responses for the occluded measurements were essentially the same as the unoccluded responses. Overall, results from REUR, REOR, and RECD demonstrate that the participants in this study exhibit typical ear canal acoustics.

A repeated measures ANOVA was conducted to determine whether there was a difference across azimuth and frequency for the two BTE HAs; a separate repeated measures ANOVA was conducted for the CI speech processors. A significant difference was noted for both aids (HA 1: F = 48.96, p = .001; HA 2: F = 213.7, p = .001). There were also differences noted for all three CI speech processors (on-ear CI 1: F = 150.65, p = .001; on-ear CI 2: F = 134.68, p = .001; head CI: F = 13.05, p = .001). A post hoc analysis was conducted to determine at which frequencies the differences occurred between azimuths. These are denoted with an asterisk in Table 1. Change in dB SPL relative to 0-degrees averaged across participants is provided in Table 1. Overall, from 250 to 6000 Hz, dB SPL was higher at 45- and 90-degrees azimuth than at 0-degrees azimuth for the on-ear devices (on-ear CI 1, on-ear CI 2, and both HAs). For the head CI, dB SPL varied little across the azimuths, with most frequencies showing a 1 dB change from 0-degrees. Across all devices, the greatest change in dB SPL across azimuths was seen at 750 Hz. Figure 3 shows the mean dB SPL frequency response as a function of presentation azimuth for HA and CI devices.

Figure 3 illustrates that, generally, a 45-degrees presentation azimuth yielded a higher dB SPL than a 0-degrees azimuth, and a 90-degrees presentation azimuth resulted in a slightly higher dB SPL than a 45-degrees azimuth. This increase in intensity was most apparent between 500 and 2000 Hz, and again, the largest increase in dB SPL was consistent with the aforementioned presentation azimuth pattern. Across all frequencies for both CIs and HAs, there was a mean increase of 2.26 dB SPL and 2.41 dB SPL for the 45- and 90-degrees presentation azimuths, respectively, when compared to 0-degrees azimuth. For both CIs and HAs, between 500 Hz and 2000 Hz there was a mean increase of 2.76 dB SPL and 3.31 dB SPL for the 45-degrees and 90-degrees azimuths, respectively, when compared to 0-degrees azimuth. Additionally, as shown in Figure 3, for the three models of CIs, at each presentation azimuth, there was a peak response in intensity at 500 Hz. After this, as the frequency increased there was a corresponding decrease in dB SPL. The mean dB SPL across azimuths at 500 Hz for the CI was 16.14 dB greater than the mean dB SPL across azimuths at 6000 Hz. HA measurements indicated that the peak response for the HA occurred at 3000 Hz across all presentation azimuths, which corresponded with the typical resonance frequency of the ear canal (Wiener & Ross, 1946). The lowest intensities were measured at 6000 Hz across all presentation azimuths. The mean decrease from the peak at 3000 Hz to the least intense response at 6000 Hz was 16.85 dB SPL. This decrease from the most intense to least intense response was similar to that of the CI, which was 16.14 dB.
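
The "change relative to 0-degrees" summaries reported above can be illustrated with a short sketch. The levels below are hypothetical placeholder values chosen only to make the arithmetic easy to follow; they are not data from this study. The function names and the sign convention (level at the test azimuth minus level at 0-degrees, so that an increase is positive) are likewise assumptions.

```python
from statistics import mean

# Hypothetical placeholder levels in dB SPL, NOT data from the study.
# Layout: {azimuth in degrees: {frequency in Hz: level in dB SPL}}
example_spl = {
    0:  {500: 60.0, 1000: 58.0, 2000: 55.0, 6000: 45.0},
    45: {500: 63.0, 1000: 61.0, 2000: 58.0, 6000: 46.0},
    90: {500: 64.0, 1000: 62.0, 2000: 58.0, 6000: 46.0},
}


def change_re_zero(spl_by_azimuth):
    """Per-frequency change at each azimuth relative to the 0-degrees level."""
    reference = spl_by_azimuth[0]
    return {
        azimuth: {freq: level - reference[freq] for freq, level in levels.items()}
        for azimuth, levels in spl_by_azimuth.items()
        if azimuth != 0
    }


def mean_change(deltas_by_freq, low_hz=None, high_hz=None):
    """Mean change across frequencies, optionally limited to a frequency band."""
    selected = [
        delta
        for freq, delta in deltas_by_freq.items()
        if (low_hz is None or freq >= low_hz) and (high_hz is None or freq <= high_hz)
    ]
    return mean(selected)


deltas = change_re_zero(example_spl)
print(mean_change(deltas[45]))             # mean change across all frequencies
print(mean_change(deltas[45], 500, 2000))  # mean change within 500-2000 Hz
```

Restricting the average to a band, as in the second call, corresponds to the way the text reports a separate mean increase for 500 to 2000 Hz.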

*Indicates values that were significantly different (p<0.05) than 0-degrees azimuth.

Figure 3: Group 1 data for each hearing instrument. The dB SPL response is plotted as a function of presentation azimuth. The y-axis represents the mean dB SPL response and the x-axis represents the frequency of stimulus. The top row represents the CIs and the bottom row represents the HAs.

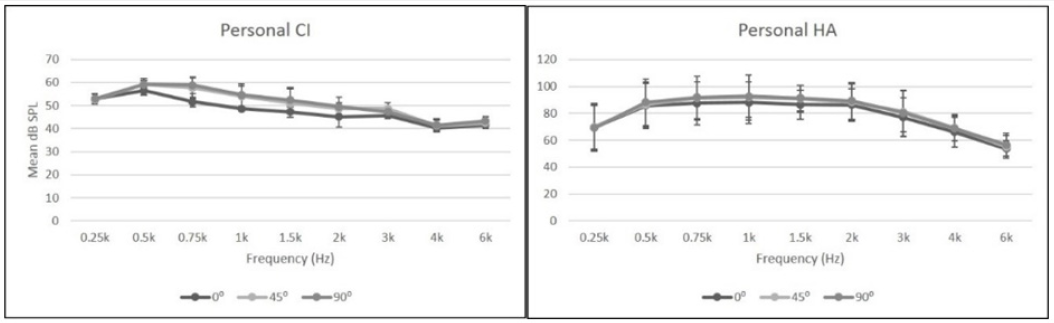

The frequency response of bimodal listeners with their personal CI and HA at the three presentation azimuths is shown in Figure 4. Similar to group 1, the results from group 2 participants also showed a peak response for the hearing devices followed by a reduction in intensity as the frequency increased across all presentation azimuths. For the CI condition, there was a similar decrease in intensity from the peak response at 500 Hz as the frequency increased. When comparing the mean peak of the CI responses across all azimuths at 500 Hz to the responses at 6000 Hz across all azimuths, there was a decrease of 15.8 dB SPL. As stated previously, the group 1 CI difference between 500 and 6000 Hz was 16.14 dB. The two groups were therefore essentially the same, differing by only 0.34 dB.

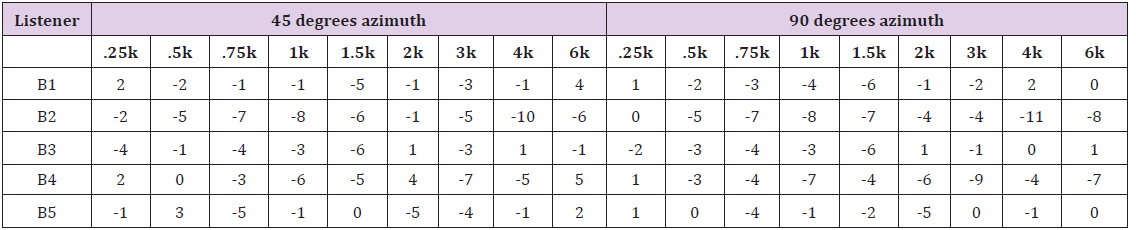

Tables 2 and 3 provide the change in dB SPL relative to 0-degrees azimuth (e.g. dB SPL at 0-degrees minus dB SPL at 45-degrees) for participants in group 2 for their personal CI and personal HA, respectively. For group 2, the greatest increase in dB SPL between the different azimuths generally occurred in the frequency range of 500 to 3000 Hz for both CIs and HAs. Across all frequencies for CIs and HAs, there was a mean increase of 1.58 dB SPL and 3.24 dB SPL for the 45- and 90-degrees presentation azimuths, respectively, when compared to 0-degrees azimuth. However, for both CIs and HAs, between 500 Hz and 3000 Hz, there was a greater mean increase of 3.65 dB SPL and 4.25 dB SPL for the 45- and 90-degrees azimuths, respectively, when compared to 0-degrees azimuth. HA responses in dB SPL decreased as the frequency increased (Figure 4). The frequency response appears to peak at 1000 Hz and becomes less intense as the frequency increases above 1500 Hz. Across presentation azimuths, the mean dB SPL was 35.67 dB lower at 6000 Hz than at the peak response at 1000 Hz. The HA difference in group 1 was 16.85 dB from its peak at 3000 Hz to 6000 Hz, which was less than half of the HA difference in group 2. This difference between the two groups could be due to several reasons. First, the HAs in group 2 (bimodal participants) were previously programmed for the participants’ hearing loss. This provided greater gain than the HAs used by the participants in group 1, which were programmed for a mild loss. Additionally, participants in group 2 utilized their custom earmold for their hearing aid while participants in group 1 utilized an open fit dome. The custom earmolds used by individuals in group 2 could retain more acoustic energy than the open fit dome product. Finally, the small sample size limits expansion of findings beyond the current study (Figure 5).
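
The group comparisons of peak-to-6000 Hz roll-off can be reproduced directly from the values reported in the text. The sketch below uses hypothetical variable names and only restates that arithmetic.

```python
# Peak-to-6000 Hz decreases in dB, as reported in the text.
ci_drop_group1 = 16.14  # CI, 500 Hz peak to 6000 Hz, group 1
ci_drop_group2 = 15.8   # CI, 500 Hz peak to 6000 Hz, group 2
ha_drop_group1 = 16.85  # HA, 3000 Hz peak to 6000 Hz, group 1
ha_drop_group2 = 35.67  # HA, 1000 Hz peak to 6000 Hz, group 2

# Difference between the groups' CI roll-off, rounded to two decimals.
ci_gap = round(ci_drop_group1 - ci_drop_group2, 2)

# Group 1 HA roll-off expressed as a fraction of the group 2 HA roll-off.
ha_ratio = ha_drop_group1 / ha_drop_group2

print(ci_gap)              # 0.34
print(round(ha_ratio, 2))  # 0.47, i.e. less than half
```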

Figure 4: Group 2 data for personal hearing instrument. The dB SPL response is plotted as a function of presentation azimuth. The y-axis represents the mean dB SPL response and the x-axis represents the frequency of stimulus. The left column represents the CIs and the right column represents the HAs.

Figure 5: Group 2 aided behavioral thresholds at each presentation azimuth. The y-axis represents the threshold in dB HL and the x-axis represents the frequency of the stimulus.

Table 3: Change in dB SPL relative to 0 degrees azimuth using personal HA for listeners in group 2.

Aided behavioral thresholds were obtained at each of the three azimuths for each of the five participants in group 2. Individual results for group 2 (bimodal listeners) for the aided thresholds plotted as a function of azimuth are shown in Figure 5. Mean thresholds at the three azimuths for the five participants ranged from 19 to 45 dB HL across the frequencies. At each frequency, the mean thresholds generally did not vary by more than 5 dB across presentation azimuths. The mean difference was 4.25 dB HL between the lowest (better) and highest (worse) thresholds measured across the presentation azimuths for all of the frequencies. The thresholds obtained at 45-degrees and 90-degrees were always lower (better) than those at the 0-degree presentation azimuth. The average improvements in thresholds in comparison to 0-degrees were 3.33 dB HL and 3.25 dB HL for 45- and 90-degrees, respectively. This mirrored the results of Dirks et al. (1972), who assessed behavioral thresholds of individuals with typical hearing. In that study, there was an average improvement of 3-4 dB HL as the azimuth shifted toward the unoccluded ear, with the greatest improvement in threshold being obtained between 30- and 60-degrees.

An effect of presentation azimuth on the on-ear measurements during verification across the hearing devices was observed. The effect was greater (increased REAR intensity) at 45- and 90-degrees when compared to 0-degrees. There was an improvement (lower thresholds) in behavioral thresholds at 45- and 90-degrees for each frequency compared to 0-degrees presentation azimuth. Comparisons across CI and HA conditions are limited due to the potential confound of signal processing within the device (HA conditions were measured post-processing; CI conditions were measured pre-processing). Despite this, the general trends across presentation azimuths hold for both HA and CI. The pattern of change noted (e.g. increase in dB SPL at 90-degrees in comparison to 0-degrees azimuth) is not likely related to processing within the HA since the same processing (within the HA) would be applied at each azimuth condition. In group 1, the frequency response of the speech signal was affected by the change in the presentation azimuth. There was approximately a 2.25 and 2.5 dB SPL increase at 45- and 90-degrees presentation azimuths, respectively, when compared to 0-degrees azimuth. The results for group 2 (bimodal listeners) were similar to group 1. Group 2 behavioral thresholds were lower (better) at 45-degrees and 90-degrees when compared to the 0-degrees presentation azimuth. For changes in aided behavioral threshold results to be clinically significant, differences between measures would need to exceed 10-15 dB (Stuart 1991). As such, the small threshold change observed in this study is not likely clinically meaningful.
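
The clinical-significance reasoning above amounts to comparing an observed threshold change with a criterion. A minimal sketch follows, assuming the lower bound of the cited 10-15 dB range as the criterion and using the mean improvements reported in the results (3.33 and 3.25 dB HL); the function and constant names are hypothetical.

```python
# Assumption: use the lower bound of the 10-15 dB range cited in the text
# as the most lenient criterion for a clinically significant change.
CLINICAL_CRITERION_DB = 10.0


def is_clinically_meaningful(change_db, criterion_db=CLINICAL_CRITERION_DB):
    """Return True if the size of a threshold change exceeds the criterion."""
    return abs(change_db) > criterion_db


# Mean threshold improvements re: 0-degrees, as reported in the results.
mean_improvement_db = {45: 3.33, 90: 3.25}

for azimuth, change in mean_improvement_db.items():
    print(azimuth, change, is_clinically_meaningful(change))
```

Even against the most lenient end of the cited range, both mean improvements fall well short of the criterion.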

In regard to microphone location with the CI, a greater effect of presentation azimuth was observed for the on-ear CIs than for the head CI. When evaluating the frequency responses for the ear-level CI, the intensity was greatest at 500 Hz and decreased as the frequency increased. This decrease in intensity may have happened due to the location of the microphones on the speech processor. The microphones on the speech processors sit on top of and slightly behind the pinna when worn by the user, possibly creating a barrier between the microphones and the sound source. It is possible that the microphones on the speech processor sit at such an angle that the sound waves traveling through the environment encounter an obstacle, resulting in a possible acoustic shadow, which can impact a frequency response. The specific frequencies falling in the acoustic shadow would be related to the dimensions of the objects creating the shadow [25-27]. The location of the microphone on the ear-level CI, situated behind the ear, could have resulted in an acoustic shadow, reducing the intensity of the sounds at the microphone. The greatest degree of variability in the aided behavioral threshold results from the participants in group 2 was observed at 6000 Hz. The greater variability in aided threshold responses at 6000 Hz may be due to factors similar to those presented previously regarding the head shadow effect. These differences may also be due to the small sample size of this group. An acoustic shadow may have occurred when the presentation azimuth was changed from 0- to 45- and 90-degrees across the hearing instruments. However, as the location of the microphone on each of the hearing devices was in a position with more direct access to the signal (i.e., 45-degrees and 90-degrees), frequencies were recorded at higher intensities, specifically between 500 and 2000 Hz.

Overall, results from the current work are consistent with previous findings, such as [3], although the frequencies most impacted by changing presentation azimuth differed. For example, [3] demonstrated a greater change in dB SPL at frequencies above 2000 Hz for changes in presentation azimuth. The current study demonstrated the greatest change from a 0-degree azimuth to occur at 750 Hz for all hearing instruments (HA and CI). The origin of this difference between studies is not clear.

Verification and validation measures are essential in ensuring that an instrument is providing sufficient gain for the user. Currently, 0-degrees presentation azimuth is recommended for verification and validation. While results from the current study do not suggest that a change in practice is necessary, consideration should be given to potential acoustic shadows created by the device itself. One specific clinical application is that presentation azimuth during testing should be documented to ensure replication across test times as changes to presentation azimuth could result in clinically meaningful changes in verification measures that may lead a clinician to modify device fit. These modifications may or may not be warranted as the deviation from fitting target may be related to presentation azimuth during verification procedures rather than to device processing.

A replication of this study including more participants should be conducted, particularly for the cochlear implant devices. Additionally, measurements at the level of the entrance of the external auditory meatus should be taken at the three azimuths in the HA condition to determine if the presentation azimuth has an effect on frequency responses at the pre-processing stage. When measuring behavioral thresholds at various azimuths, smaller steps (i.e., 2 dB steps) could be used in place of the modified Hughson-Westlake technique used in this study for a more accurate measure of absolute threshold. Lastly, this study could be expanded to measure speech perception at various azimuths in individuals with hearing instruments and those with typical hearing. This may have more clinical relevance for real world performance and counseling based on speech perception testing.