Impact Factor : 0.548

- NLM ID: 101723284

- OCoLC: 999826537

- LCCN: 2017202541

Alpana Upadhyay*1 and Monika Vinish2

Received: February 28, 2018; Published: March 13, 2018

*Corresponding author: Alpana Upadhyay, Associate Professor & Head Department of MCA, Sunshine College, Gujarat Technological University, Gujarat, India

DOI: 10.26717/BJSTR.2018.03.000846

In the current era of Information and Communication Technology (ICT), these technologies have dramatically changed human life and permeated all facets of modern society. Over the past several years, neuroscience research has opened up many opportunities for designing and developing brain-based neurotechnologies, and it is itself now driven by such technological progress. Neuroscience is centered on the study of neural codes and computations using advanced data analytics. Artificial neural networks in machine learning, on the other hand, tend to eschew precisely designed codes, circuits and dynamics in favor of brute-force optimization starting from a simple and relatively uniform initial architecture. Flexible, adaptive, brain-based neurotechnology that incorporates and capitalizes on human capabilities is used to improve brain-computer interaction. Brain-Computer Interfaces (BCIs) are the front runner of this idea and are fundamentally intended to enhance quality of life, particularly for clinical populations.

Keywords: Machine Learning; Computational Neuroscience; Brain-Computer interface; Artificial Neural Network; Deep Learning

Abbreviations: ICT: Information and Communication Technology; BCI: Brain-Computer Interface; BBCI: Berlin Brain-Computer Interface

The greatest mystery in science is the working of the brain. Brain-computer interfacing is an active and highly interdisciplinary research topic at the interface between medicine, psychology, machine learning and signal processing, rehabilitation engineering, and man-machine interaction [1,2]. The technology can help people overcome a bad day by adjusting their environment to reach a preferred brain state, and it can help doctors recognize brain-based disorders and diseases before they interfere with daily life and neural activity. It also helps doctors communicate with greater clarity before behavioral symptoms start to appear. From the perspective of man-machine interaction research, the communication channel from a healthy human's brain to a computer has not yet been explored intensively; however, it has the potential to speed up reaction times or to provide a better understanding of a human operator's mental states [3]. In this respect, the Berlin Brain-Computer Interface (BBCI) imposes the main load of the learning task on the learning machine, which has the potential to adapt to specific tasks and changing environments, provided that suitable machine learning and signal processing algorithms are used [4,5]. This approach requires only a short training time, during which the machine learns to distinguish the individual's brain states [6-12]. This paper discusses machine learning techniques for Brain-Computer Interfaces with respect to the high-dimensional, small-sample statistics scenario, where the strength of modern machine learning lies.

Brain-Computer Interface (BCI) technology is a powerful communication tool between users and systems. It does not require any external devices or muscle intervention to issue commands and complete the interaction [13]. The research community initially developed BCIs with biomedical applications in mind, leading to the generation of assistive devices [14]. BCI systems build a communication bridge between the human brain and the external world, eliminating the need for typical information delivery methods. They handle the transmission of messages from the human brain by decoding silent thoughts. They can thus help people with disabilities express and write down their opinions and ideas through a variety of methods, such as spelling applications [15], semantic categorization [16], or silent speech communication.

Machine learning methods for Brain-Computer Interfaces follow an architecture built on two functions:

a) Training function and

b) Prediction function.

In supervised learning, a model M, which may be parametric or non-parametric, is selected and learns a mapping from a set of inputs to the corresponding outputs. In unsupervised learning, the machine learns the structure of the input space from the given learning examples. Figure 1 describes the architecture of machine learning methods for BCI.

Figure 1: Architecture of Machine Learning Methods for BCI.
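The split into a training function and a prediction function can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the architecture of any particular BCI system: the nearest-class-mean rule, the names `train` and `predict`, and the toy feature values are all hypothetical choices made for this example.

```python
from typing import Dict, List, Sequence

Vector = Sequence[float]


def train(features: List[Vector], labels: List[int]) -> Dict[int, List[float]]:
    """Training function: estimate one mean feature vector per class."""
    sums: Dict[int, List[float]] = {}
    counts: Dict[int, int] = {}
    for x, y in zip(features, labels):
        if y not in sums:
            sums[y] = [0.0] * len(x)
            counts[y] = 0
        sums[y] = [s + v for s, v in zip(sums[y], x)]
        counts[y] += 1
    return {y: [s / counts[y] for s in sums[y]] for y in sums}


def predict(model: Dict[int, List[float]], x: Vector) -> int:
    """Prediction function: return the class whose mean is closest to x."""
    def sq_dist(a: Vector, b: Vector) -> float:
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return min(model, key=lambda y: sq_dist(model[y], x))


# Toy two-class data (hypothetical values standing in for extracted features).
X_train = [[0.0, 0.1], [0.2, -0.1], [1.0, 0.9], [0.8, 1.1]]
y_train = [-1, -1, 1, 1]

model = train(X_train, y_train)       # supervised learning step
label = predict(model, [0.9, 1.0])    # applying the learned model to new data
print(label)
```

The model returned by `train` is the only state shared between the two functions, which is what allows the prediction function to run online once training has finished.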

Machine learning develops probabilistic methods that discover structures and patterns in data. These methods are then applied to real-world scientific problems. Neuroinformatics, or computational neuroscience, studies how the brain processes information and how that processing can be analyzed and interpreted [7]; neuroscientific experiments are conducted for this purpose. The first task is classification, in which a rule is found on the basis of external observations. In the simplest case there are just two classes. A function f is then estimated from a given function class F using training examples, and the test examples are assumed to be generated from the same probability distribution p(x, y) as the training examples. The best function f is obtained by minimizing the expected error, also called the risk. Because the underlying probability distribution is unknown, the risk cannot be minimized directly and has to be approximated. The following formula gives the approximation of the minimum risk.

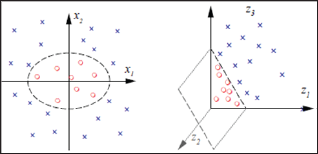
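For reference, the expected risk and its empirical approximation are commonly written as follows in statistical learning theory. The loss function l, the sample size n and the symbols R and R_emp are notational assumptions introduced here, so the exact form used in the formula above may differ.

```latex
% Expected risk of f under the unknown distribution P(x, y)
\[
  R[f] = \int l\bigl(f(x), y\bigr)\, dP(x, y)
\]

% Empirical risk on n training examples (x_1, y_1), ..., (x_n, y_n)
\[
  R_{\mathrm{emp}}[f] = \frac{1}{n} \sum_{i=1}^{n} l\bigl(f(x_i), y_i\bigr)
\]

% A common choice for two-class problems: the 0/1 loss
\[
  l\bigl(f(x), y\bigr) =
  \begin{cases}
    0 & \text{if } f(x) = y, \\
    1 & \text{otherwise.}
  \end{cases}
\]
```

With the 0/1 loss, the empirical risk is simply the fraction of misclassified training examples.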

It is possible to give conditions on the learning machine which ensure that the empirical risk converges asymptotically to the expected risk. For small sample sizes, however, large deviations are possible and overfitting may occur. Figure 2 illustrates overfitting.

Figure 2: Illustration of Overfitting.
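The gap between empirical and expected risk on small samples can be made concrete with a toy experiment. The sketch below is illustrative only: the one-dimensional data, the 20% label noise, the sample sizes and the two classifiers are all assumptions chosen for this example. A 1-nearest-neighbour rule memorizes the 30 training points, while a fixed threshold at zero stands in for a low-complexity model.

```python
import random


def zero_one_risk(classifier, xs, ys):
    """Empirical risk: fraction of examples the classifier labels incorrectly."""
    return sum(classifier(x) != y for x, y in zip(xs, ys)) / len(xs)


def sample(n, rng, noise=0.2):
    """Draw n points from [-1, 1]; the label is sign(x), flipped with probability `noise`."""
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    ys = []
    for x in xs:
        y = 1 if x >= 0 else -1
        if rng.random() < noise:
            y = -y
        ys.append(y)
    return xs, ys


rng = random.Random(0)                 # fixed seed so the run is repeatable
x_train, y_train = sample(30, rng)     # small training sample
x_test, y_test = sample(1000, rng)     # large sample approximating the expected risk


def memorizer(x):
    """High-complexity rule: copy the label of the closest training point (1-NN)."""
    nearest = min(range(len(x_train)), key=lambda i: abs(x_train[i] - x))
    return y_train[nearest]


def threshold(x):
    """Low-complexity rule: a single fixed threshold at zero."""
    return 1 if x >= 0 else -1


for name, clf in [("1-NN", memorizer), ("threshold", threshold)]:
    train_risk = zero_one_risk(clf, x_train, y_train)
    test_risk = zero_one_risk(clf, x_test, y_test)
    print(f"{name}: training risk {train_risk:.3f}, test risk {test_risk:.3f}")
```

The memorizing rule reaches zero training risk because it reproduces every noisy training label, yet it still makes errors on the held-out sample. This is the situation that the text describes as overfitting.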

Linear classifiers are commonly used in Brain-Computer Interfaces. Linear classification relies on a simple model. However, if the assumptions about the underlying probability distribution do not hold, or if outliers are present, the results can be wrong, and this does happen in the analysis of BCI data. In BCI data, samples x_k (k = 1, ..., K) are measured first, where each x_k represents one sample point. To obtain a linear hyperplane classifier, the normal vector w is estimated and a threshold b is calculated by some optimization technique [8]. The resulting hyperplane is described as follows.

A linear classifier is defined by a hyperplane's normal vector w and an offset b, i.e. the decision boundary is {x | w^T x + b = 0} (thick line). Each of the two half-spaces defined by this hyperplane corresponds to one class, i.e. f(x) = sign(w^T x + b). The margin of a linear classifier is the minimal distance of any training point to the hyperplane. In this case it is the distance between the dotted lines and the thick line [5]. There are various linear classification techniques, such as optimal linear classification.
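The decision rule f(x) = sign(w^T x + b) and the margin can be computed directly from their definitions. In the sketch below, the values of `w`, `b` and the training points are hypothetical, and sign(0) is mapped to +1 by convention.

```python
import math


def decision_value(w, b, x):
    """Signed score w^T x + b of point x relative to the hyperplane."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def classify(w, b, x):
    """f(x) = sign(w^T x + b); a score of exactly zero is assigned to class +1."""
    return 1 if decision_value(w, b, x) >= 0 else -1


def margin(w, b, points):
    """Minimal Euclidean distance of any point to the hyperplane w^T x + b = 0."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return min(abs(decision_value(w, b, x)) / norm for x in points)


# Hypothetical hyperplane x1 + x2 - 1 = 0 and three training points.
w = [1.0, 1.0]
b = -1.0
points = [[0.0, 0.0], [2.0, 1.0], [1.0, 1.0]]

print([classify(w, b, x) for x in points])
print(margin(w, b, points))
```

Dividing by the norm of w converts the raw score into a geometric distance, so the margin does not change when w and b are rescaled by the same positive factor.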

Kernel-based learning, in particular, performs nonlinear classification. Although the overall classification is nonlinear in input space, the algorithm itself remains linear in feature space, because feature space and input space are nonlinearly related. Algorithms in feature space rely on a nonlinear mapping of the following form:

Φ: R^n → F, x ↦ Φ(x)

Here the data x1, x2, ..., xn ∈ R^n are mapped into a feature space F, and the same learning algorithm is then applied in F instead of R^n.
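A small example shows how a nonlinear mapping turns a nonlinear problem in input space into a linear one in feature space. The map used here, second-order monomials of a two-dimensional input, is an assumption chosen for illustration and is not stated in the text, as are the hyperplane parameters and the test points.

```python
import math


def phi(x):
    """Assumed feature map: (x1, x2) -> (x1^2, sqrt(2)*x1*x2, x2^2)."""
    x1, x2 = x
    return [x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2]


def dot(a, b):
    """Ordinary inner product of two equal-length vectors."""
    return sum(ai * bi for ai, bi in zip(a, b))


# In input space the unit circle x1^2 + x2^2 = 1 is a nonlinear boundary.
# In feature space it becomes the hyperplane w . z + b = 0 with:
w = [1.0, 0.0, 1.0]
b = -1.0


def classify(x):
    """Linear classifier applied to the mapped point phi(x)."""
    return 1 if dot(w, phi(x)) + b >= 0 else -1


inside = [0.2, -0.3]     # lies inside the unit circle
outside = [1.5, 0.5]     # lies outside the unit circle
print(classify(inside), classify(outside))

# For this map, the inner product in feature space equals the squared inner
# product in input space, so it can be evaluated without computing phi.
x, y = [1.0, 2.0], [3.0, 4.0]
print(dot(phi(x), phi(y)), dot(x, y) ** 2)
```

The last two values agree, which is the property that lets kernel methods work in F without ever constructing the mapped vectors explicitly.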

In this type of classification, the computation is first carried out in F. The same idea is used implicitly in neural networks and radial basis function networks [9,10] and in boosting algorithms [11], where the input data are mapped to a hidden-layer representation, to RBF bumps, or to a hypothesis space, respectively. Learning in F can nevertheless be simple if a low-complexity linear classifier is used. In the two-dimensional classification example of Figure 3, a rather complicated nonlinear decision surface is needed to separate the classes in input space, whereas in feature space a hyperplane suffices for the separation. In this way two things are controlled:

a. The algorithmic complexity of the learning machine, by working in feature space, and

b. The statistical complexity, by using a simple linear hyperplane.

Figure 3 shows the two-dimensional classification example.

Figure 3: Two Dimensional Classification.

Over the past few years, neuroscience research has expanded rapidly. Neurotechnologies enable computers to improve their ability to predict cognitive and emotional states through machine learning. However, the development of Brain-Computer Interface technologies still has to overcome numerous barriers. For instance, people's outstanding ability to adapt to varied tasks and dynamic environments makes it difficult to interpret an individual's neural behavior at any one point in time. This problem can arise from signal noise caused by overlapping neural processes, which result from performing multiple tasks simultaneously, and from environmental effects. Simply reducing the training error does not guarantee a small error on unseen data, and it can lead to overfitting of the model even for linear methods. One way to overcome this is to restrict the complexity of the function class, for example to linear functions that explain most of the data. This, however, requires an explicit outlier removal step. In conclusion, model selection is very important. If an ideal model can be selected, the complexity of the learning algorithm becomes less important. Model selection should therefore be carried out with the most suitable method, whether linear or nonlinear machine learning classifiers are used.
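One widely used way to carry out model selection is k-fold cross-validation, which estimates the error on unseen data by holding out part of the training set in turn. The text does not prescribe a specific procedure, so the sketch below is only one possible approach: the toy data, the two candidate models (1-NN and 9-NN on one-dimensional inputs) and all function names are hypothetical.

```python
import random


def k_fold_indices(n, k):
    """Split the indices 0..n-1 into k contiguous folds of near-equal size."""
    folds, start = [], 0
    for i in range(k):
        size = n // k + (1 if i < n % k else 0)
        folds.append(list(range(start, start + size)))
        start += size
    return folds


def cross_val_error(fit, xs, ys, k=5):
    """Average held-out 0/1 error of the model family `fit` over k folds."""
    errors = []
    for fold in k_fold_indices(len(xs), k):
        held_out = set(fold)
        x_tr = [x for i, x in enumerate(xs) if i not in held_out]
        y_tr = [y for i, y in enumerate(ys) if i not in held_out]
        predict = fit(x_tr, y_tr)
        wrong = sum(predict(xs[i]) != ys[i] for i in fold)
        errors.append(wrong / len(fold))
    return sum(errors) / len(errors)


def make_knn(k):
    """Return a fit function for a k-nearest-neighbour majority vote on 1-D inputs."""
    def fit(x_tr, y_tr):
        def predict(x):
            nearest = sorted(range(len(x_tr)), key=lambda i: abs(x_tr[i] - x))[:k]
            return 1 if sum(y_tr[i] for i in nearest) >= 0 else -1
        return predict
    return fit


# Toy data: label is sign(x) with 20% of the labels flipped (assumed noise level).
rng = random.Random(1)
xs = [rng.uniform(-1.0, 1.0) for _ in range(60)]
ys = [(1 if x >= 0 else -1) * (-1 if rng.random() < 0.2 else 1) for x in xs]

candidates = {"1-NN": make_knn(1), "9-NN": make_knn(9)}
scores = {name: cross_val_error(fit, xs, ys) for name, fit in candidates.items()}
best = min(scores, key=scores.get)
print(scores, "selected:", best)
```

Because every candidate is scored only on data it was not fitted to, the comparison penalizes models that merely memorize the training set.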