Impact Factor : 0.548

- NLM ID: 101723284

- OCoLC: 999826537

- LCCN: 2017202541

Neelam Doshi*1, Carmel Tepper2 and Robert Gordon Wright3

Received: October 02, 2017; Published: October 12, 2017

Corresponding author: Neelam Doshi, Pathology, Faculty of Health Sciences and Medicine Bond University, Robina Gold Coast, 4226, Australia

DOI: 10.26717/BJSTR.2017.01.000435

Introduction: The Bond Medical Program delivers pathology in the preclinical years through interactive learning. Assessment for learning demands fit-for-purpose assessment that aligns with the curriculum. In Year 2 of the medical curriculum, clinical pathology is assessed through a series of written examinations and an integrated practical assessment (IPA). The IPA is a practical examination held in a laboratory, which permits the use of multimedia. The traditional written paper examines the theoretical aspects of pathology, while the IPA assesses observational skills and the three-dimensional application of pathophysiology to disease processes.

Objective: To determine whether a difference exists in student performance on pathology questions between the IPA and a written examination.

Methods: Year 2 undergraduate medical students sat a 50-station IPA, followed by a 50-question written paper. Performance in the written assessment and the IPA was compared, and the association between the two was measured using the Pearson correlation coefficient.

Result: A positive Pearson’s correlation coefficient of percentage scores (r = 0.68, significant at the 0.01 level) between the written assessment and the IPA suggests a strong association between the two assessment methods.

Conclusion: Students’ scores in the IPA and the written assessment correlate well, which suggests that either could be used to predict students’ performance in pathology. The IPA enables students to connect the basic sciences with the clinical sciences, thus aligning our learner-centred pathology curriculum with the assessment tools.

Keywords: Assessment; Clinico-pathological correlation; Integrated; Pathology; Performance

The shift in the medical curriculum from a process-based, traditional didactic model to a competency-based, integrated model requires alignment of assessment with teaching and learning. The teaching and learning of pathology in the undergraduate medical curriculum has been evolving over the last two decades, which demands changes in assessment methods [1]. Medical schools are continuously exploring methods to integrate basic sciences and clinical sciences for better understanding of the disease process and its clinical application [2]. ‘Assessment for learning’ demands ‘fit-for-purpose’, multi-modal and longitudinal assessment [3]. For a robust medical program, the assessment process should reflect the content of the curriculum and the teaching approaches used. Assessing observational skills and the clinical application of basic sciences is a valuable tool for learning pathology.

The Bond University Medical program is a 4.8 year accelerated MD degree. The first three years are pre-clinical and the last two years are clinical hospital rotations. The pathology syllabus in the preclinical years is delivered through problem-based learning, didactic lectures, tutorials with macroscopic museum specimens, case-based workshops, and simulation at the Bond Virtual Hospital. The relevant macroscopic pathology museum specimens are used in face-to-face sessions so that students can observe the macroscopic pathological changes in three dimensions and correlate them with the pathophysiological disease process (Figure 1).

Figure 1: Pathology tutorial with museum specimens.

Macroscopic observational skills and the ability to identify microscopic histological features enable a doctor to understand the relationship between the pathological changes of a disease and its clinical symptoms and signs, and help to derive a clinical diagnosis that guides patient management. Pathologists work closely with clinicians to deliver holistic patient care. For example, when students see a museum specimen of papillary urothelial carcinoma in the bladder, they can associate it with a patient’s symptoms of hematuria and urinary frequency.

Well-structured clinical vignettes are used in association with multimedia such as anatomy models, videos, macroscopic museum specimens, laboratory reports and histopathology images to assess learners’ clinical reasoning skills. The IPA requires integration of knowledge and understanding of the disease process, and can test the learner’s three-dimensional observational skills in pathology [6], to which students are exposed during their face-to-face pathology teaching. Answering questions related to the gross specimens allows for integration of basic sciences with clinical sciences, which aids the clinico-pathological correlation skills useful in clinical practice.

At Bond University, Year 2 students undertake an IPA and a multi-disciplinary written exam at the end of each semester. To measure any difference in students’ performance between the written and practical assessments, this study presents the correlation between yearly cumulative performance in the traditional written assessment and the new integrated practical assessment for the 2015 Year 2 cohort. Students were de-identified and rank ordered according to their yearly summative written and IPA score percentages. The cumulative raw scores over three semester exams for Year 2 students (n=93) were converted to percentages and rank ordered for both the IPA and the written assessment.

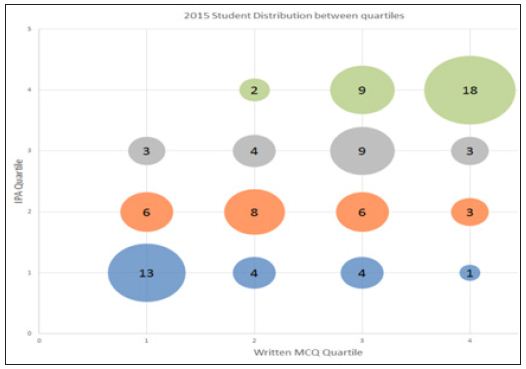

They were grouped into quartiles 1, 2, 3 and 4, corresponding to scores of 0-25%, 26-50%, 51-75% and 76-100%, respectively, and rank ordered. Using a combination of statistical packages, Microsoft Excel (Microsoft, Redmond, WA) and SPSS ver. 23 (SPSS Inc., Chicago, IL), Pearson’s correlation coefficient was calculated to measure the strength of association between the two assessment modalities.
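The banding and correlation steps described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the study itself used Excel and SPSS, the function names `score_band` and `pearson_r` are hypothetical, the handling of non-integer percentages at the band edges is a guess, and the score lists are invented purely to exercise the code.

```python
import math

def score_band(pct):
    """Map a percentage score to band 1-4 using the ranges stated in the text
    (0-25, 26-50, 51-75, 76-100). Assumption: a non-integer score just above
    a cut-off (e.g. 25.5) falls into the higher band."""
    if not 0 <= pct <= 100:
        raise ValueError("percentage must be between 0 and 100")
    if pct <= 25:
        return 1
    if pct <= 50:
        return 2
    if pct <= 75:
        return 3
    return 4

def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two lists of equal length with at least 2 values")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sums of cross-products and squared deviations from the means.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical percentage scores for six students (not data from the study).
written_pct = [40, 55, 62, 70, 81, 90]
ipa_pct = [45, 50, 70, 65, 85, 88]

print([score_band(p) for p in written_pct])
print(round(pearson_r(written_pct, ipa_pct), 2))
```

A value of r near +1 indicates that students who score highly on one paper tend to score highly on the other; the significance test reported by SPSS is not reproduced in this sketch.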

Assessment of learning ensures learners’ competency and evaluates the quality of the training program [4]. Assessment also drives further learning [5]. Over the years, pathology has been assessed through oral, written and practical examinations. In previous curricula of the Bond Medical program, pathology was examined as a separate entity through a written paper consisting of multiple choice questions (MCQs), short answer questions (SAQs) and extended matching questions (EMQs), which were recall questions not based on a clinical vignette.

In the current integrated examination, pathology is embedded within a clinical scenario, testing learners’ theoretical knowledge and practical application ability [1]. This helps to relate pathological processes to clinical problems through MCQs, SAQs, EMQs and objective structured practical examinations (OSPEs), and provides good face validity [6]. In 2015, along with a series of written papers (MCQ, SAQ, EMQ), students undertook a clinically oriented integrated practical assessment (IPA), which is a hybrid of the ‘old spotter’ and the OSPE [7]. The Bond University IPA is a time-based, sequential 50-station practical exam, blueprinted against the learning outcomes and held in a laboratory setting (Figure 2).

Figure 2: Year 2 Integrated Practical Assessment at Bond Medical School.

A 4x4 contingency table of quartile ranges was made to visualize the distribution of the IPA and written examination scores (Figure 3). Table 1 and Figure 3 highlight that 24 students scored better in the IPA, compared with 21 in the written exam. The graph (Figure 3) shows that the 18 students who did well (quartile 4) in the IPA were the same students who did well in the written exam, and the 13 students who did poorly (quartile 1) were the same in both assessment methods. This suggests that students in the highest (quartile 4) or lowest (quartile 1) quartile maintained their performance irrespective of the assessment modality, whereas students in the middle quartiles (2 and 3) moved across quartiles.
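As a rough illustration of how such a 4x4 table can be built and summarised, the sketch below cross-tabulates two lists of quartile labels and counts how many students stayed in the same quartile, placed higher in the IPA, or placed higher in the written exam. The helper names `cross_tab` and `summarize` and the six-student data are hypothetical; they are not taken from the study.

```python
def cross_tab(written_q, ipa_q, levels=4):
    """Build a levels x levels table: rows = written quartile, columns = IPA quartile."""
    table = [[0] * levels for _ in range(levels)]
    for w, i in zip(written_q, ipa_q):
        table[w - 1][i - 1] += 1  # quartile labels are 1-based
    return table

def summarize(table):
    """Return (same quartile, higher in IPA, higher in written) counts."""
    n = len(table)
    same = sum(table[k][k] for k in range(n))  # main diagonal
    ipa_higher = sum(table[w][i] for w in range(n) for i in range(n) if i > w)
    written_higher = sum(table[w][i] for w in range(n) for i in range(n) if i < w)
    return same, ipa_higher, written_higher

# Hypothetical quartile labels for six students (not data from the study).
written_q = [1, 1, 2, 3, 4, 4]
ipa_q = [1, 2, 2, 2, 4, 3]

table = cross_tab(written_q, ipa_q)
same, ipa_higher, written_higher = summarize(table)
total = same + ipa_higher + written_higher
print(table)
print(f"same quartile: {same}/{total} ({100 * same / total:.1f}%)")
```

Cells on the main diagonal correspond to students whose quartile did not change with the assessment modality; off-diagonal cells correspond to students who moved up or down.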

Figure 3: Correlation of quartile rank order between the IPA and written examination for the 2015 cohort.

Table 1:

The table in Figure 3 shows that the quartile placement of 51.6% (48/93) of students was not affected by assessment modality, whereas the remaining 48.4% (21 + 24 = 45 of 93) moved either up or down the quartile range when challenged with two different assessment methods. Figure 4 shows the positive Pearson’s correlation coefficient of percentage scores (r = 0.68, significant at the 0.01 level) between the two assessment methods. A scatter plot of the two variables (IPA score % and written score %) shows that the line of best fit slopes in the positive direction, i.e. there is a positive association between the marks in the two exams. Cronbach’s alpha is a measure of reliability [7], and a value of 0.7, which is close to 1.0, suggests good reliability of our IPA exam.
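The text does not say how Cronbach’s alpha was computed for the IPA, so the following is only a minimal sketch of the standard formula, alpha = k/(k-1) × (1 − Σ item variances / variance of total scores), where k is the number of items (here, stations). The function name `cronbach_alpha` and the small score matrices are hypothetical.

```python
from statistics import variance

def cronbach_alpha(scores):
    """Cronbach's alpha for a score matrix with one row per student
    and one column per item (e.g. per station)."""
    k = len(scores[0])
    if k < 2:
        raise ValueError("need at least two items")
    # Sample variance of each item (column) across students.
    item_vars = [variance([row[j] for row in scores]) for j in range(k)]
    # Sample variance of each student's total score.
    total_var = variance([sum(row) for row in scores])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)

# Hypothetical marks: three students, three items that rank them identically.
consistent = [[1, 1, 1], [2, 2, 2], [3, 3, 3]]
# Hypothetical marks: four students, two items that are unrelated to each other.
unrelated = [[1, 0], [0, 1], [1, 1], [0, 0]]

print(cronbach_alpha(consistent))
print(cronbach_alpha(unrelated))
```

Items that rank students consistently push alpha towards 1, while unrelated items pull it towards 0, which is why a value around 0.7 is commonly read as acceptable internal consistency.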

Figure 4: Pearson correlation coefficient curve, r = 0.68.

Our study shows that a higher number of students (n=24) did better in the IPA than in the written exam (n=21). A Cronbach’s alpha of 0.7 indicates that the IPA is a reliable assessment tool. The study by Smith et al. [7] on a robust assessment method for anatomy, the Integrated Anatomy Practical Paper (IAPP), revealed consistently strong reliability coefficients (Cronbach’s alpha) of up to 0.923 and suggested that the IAPP is an integrated, relatively cost-effective and fit-for-practice tool for assessing anatomical knowledge and application.

The IPA was developed based on the IAPP. The combination of well-structured clinical vignettes and three-dimensional observation of macroscopic specimens allows testing of visual-spatial ability and gives students ‘an experience of actual learning’ [8]. The IPA helps to correlate structural pathology [5] with the clinical symptoms and signs of a disease, which fosters clinico-pathological correlation skills in students.

Jones et al. [9] concluded in their study that the introduction of 3D printed anatomical models could be a disruptive technology to improve surgical education and clinical practice. This reinforces that three-dimensional learning and correlation can happen with real-life museum specimens and not with 3D printed pathology images.

This study suggests that the IPA is a reliable exam tool, based on a single small cohort (n=93). This indicates a direction for further study by collecting data on more cohorts. Students’ perceptions of the IPA are not included; these would help in understanding its advantages and disadvantages. The cost-effectiveness and logistics of running the IPA need to be considered. The inability to handle pathology specimens in pots hinders the tactile aspect of deeper learning.

A strong association (r = 0.68) between the two assessment methods is shown by the positive Pearson’s correlation curve. This suggests that students’ performance in the IPA correlated well with the written assessment, so either could be used to predict their learning. The written assessment examines theoretical knowledge, and the IPA assesses the three-dimensional application of knowledge to understand the pathophysiology of a disease. Though it is a small single-cohort study, it suggests that the IPA could be a reliable and feasible assessment tool to integrate basic sciences with clinical sciences. This study provides reassurance that the pathology teaching methods are aligned with the assessment tools in our undergraduate medical program.

The authors would like to thank Mr. Luke de Beus, Faculty Assessment Assurance Officer, for providing help with statistical analysis.